h5i context init / trace / commit

Versioned reasoning workspace

Every OBSERVE → THINK → ACT step is stored as a DAG node linked to its code commit in refs/h5i/context. Survives session resets, machine switches, and team handoffs.

h5i hook session-start

Auto context injection

A SessionStart hook injects prior goal, milestones, and last decisions into every new Claude session — no manual restore step needed.

h5i context show --depth 1|2|3

Progressive disclosure

Pay only for the depth you need: depth 1 (~800 tokens) gives a compact index; depth 2 adds the timeline; depth 3 includes the full OTA log.

h5i context branch / merge

Reasoning branches

Explore a risky alternative without polluting the main thread — exactly like git branch. Merge nodes are recorded in the DAG with two parent IDs.

h5i context restore <sha>

Reasoning time-travel

Every h5i commit auto-snapshots the context workspace. Restore reasoning to any past commit SHA — or diff how it evolved between two commits.

h5i hook stop

Auto checkpoint on stop

A Stop hook summarises recent ACT entries and commits a milestone automatically when Claude stops — the next session always has a clean checkpoint.

h5i memory snapshot / diff

Memory versioning

Snapshots Claude's memory files at every commit in refs/h5i/memory, diffs them across versions, and syncs them to teammates via h5i push.

h5i claims add / list / prune

Content-addressed claims

Record what the agent concluded — each claim pins its evidence as a Merkle hash over the files it depends on. Stays live until any evidence blob changes, then auto-invalidates. Injected into the next session's preamble so the agent skips re-grounding — measured A/B (N=10) shows ~77% fewer cache-read tokens and ~5.6× fewer file reads.

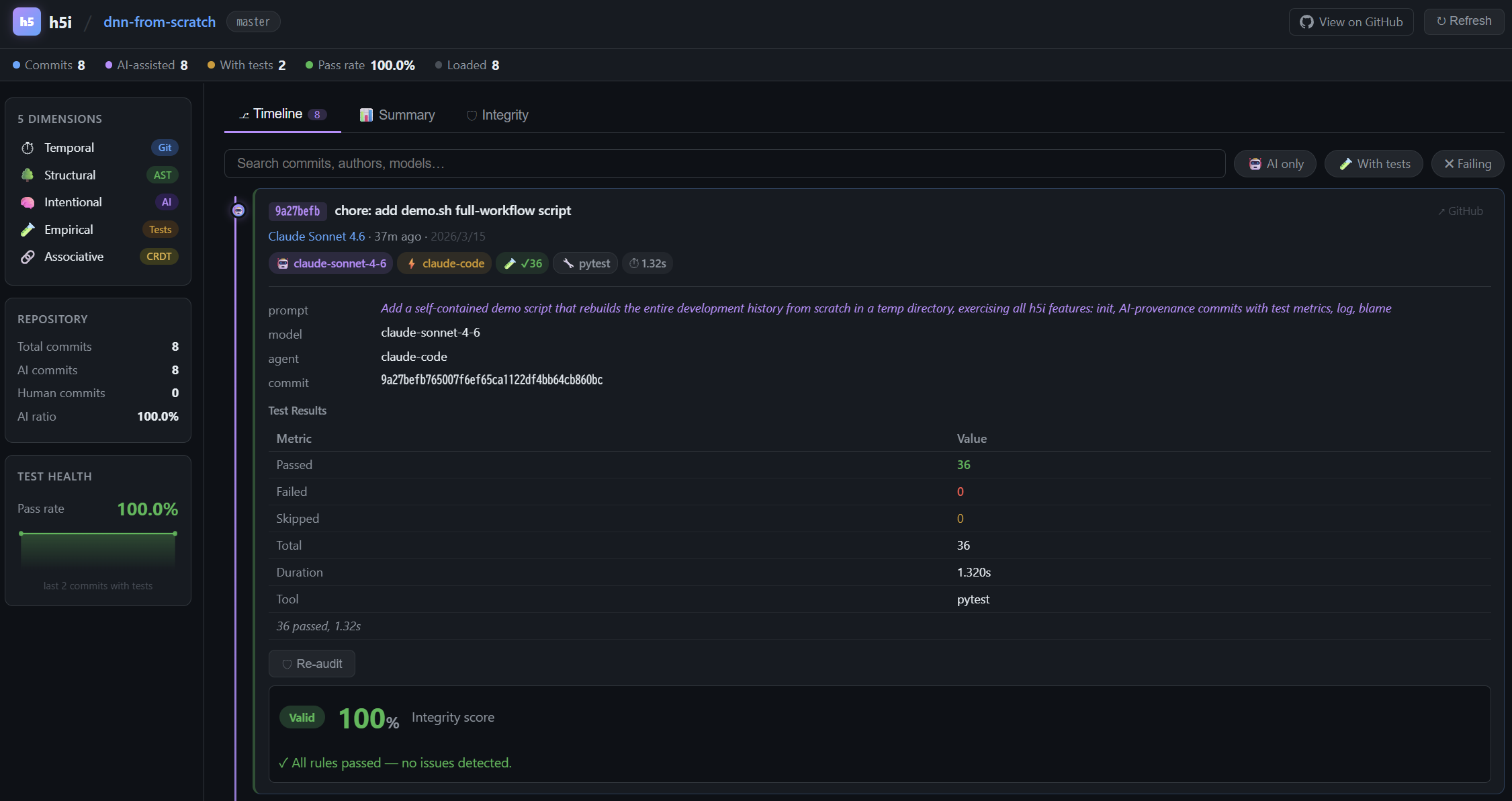

h5i commit --prompt … --audit

AI-tagged commits

Stores the exact prompt, model, agent ID, and test results alongside every diff in refs/h5i/notes. Automatic with hooks installed.

h5i notes footprint / uncertainty

Session analysis

After each session: exploration footprint, uncertainty heatmap (every hedge with confidence score), omissions (stubs, deferrals, broken promises), and blind-edit coverage.

h5i commit --audit

Integrity audit

12 deterministic rules — no AI in the audit path — checking credential leaks, CI/CD tampering, scope creep, and eval() patterns.

h5i context scan

Injection detection

Scans every OBSERVE/THINK/ACT entry for prompt-injection signals — instruction overrides, role hijacks, credential exfiltration — and reports a 0.0–1.0 risk score.

h5i resume

Session handoff

Ready-to-paste briefing — goal, progress, risky files, suggested opening prompt — generated entirely from local data. No API call.

h5i push / pull

Team sharing

Syncs all h5i refs (notes · context · memory) to/from the remote in one command — teammates see full provenance and reasoning history.

h5i serve

Web dashboard

Browser UI with Timeline, Summary, Integrity, Intent Graph, Memory, and Sessions tabs at localhost:7150.